Harness Engineering: How to Build Reliable AI Agents by Engineering the System, Not the Model

Agent reliability doesn't come from picking the right model; it comes from engineering the system around it. Learn what harness engineering is, how to classify and fix agent failures, and how to build production-grade agent harnesses with Haystack.

Published on April 23, 2026 · 12 min read

TLDR

Harness engineering is the discipline of designing the systems, constraints, and feedback loops that wrap around an AI model to make it reliable in production. The system itself, the agent harness, is everything except the model: tools, memory, guardrails, verification, and orchestration. If you're building AI agents and wondering why swapping models isn't fixing your reliability problems, the harness is likely the missing piece.

Most AI engineering teams in 2026 are stuck chasing model upgrades. They swap one frontier model for another, hoping that this time the agent won't get stuck in a recursive loop or hallucinate a response that violates corporate compliance. But the evidence points in a different direction. One team moved a coding agent from the bottom 30 to the top 5 on Terminal Bench 2.0 — same model, same weights, zero retraining. The only thing that changed was the system around the model.

That system is the harness, and the formula is simple: Agent = Model + Harness. The model provides intelligence. The harness provides everything else: tools, memory, constraints, verification, and orchestration. This post explains what an agent harness is, why it matters more than your model choice, and how to engineer one for your use case.

What Is an Agent Harness?

An agent harness is everything in an AI agent system except the model itself. The model provides raw reasoning capability. The harness provides the infrastructure that makes that reasoning useful: the tools, memory, constraints, verification checks, and orchestration logic that turn a single powerful prediction engine into a system that can actually do reliable work over time.

The term was popularized by Mitchell Hashimoto (co-founder of HashiCorp, creator of Terraform) in a February 2026 blog post describing his AI coding workflow. His core insight resonated because it reframed how teams should think about agent failures: every time an agent makes a mistake, don't just hope it does better next time. Engineer the environment so that specific mistake becomes structurally harder to repeat.

Days later, OpenAI published a detailed account of an engineering team that had built a million-line codebase with no manually written code. Their job wasn't writing code. It was designing the environment: architectural constraints enforced by linters, a structured documentation directory as the single source of truth, and feedback loops that caught problems before they compounded. The model was capable. The harness made it productive.

The question, then, is what a well-designed harness actually looks like, and the answer becomes clearest when you look at the specific problems it needs to solve.

Why Does the Harness Matter More Than the Model?

Raw models have specific, documented limitations that no amount of prompting can fully solve. Understanding these limitations is what motivates every component of the harness.

Context rot is the first. Models have a shelf life during a conversation. As the context window fills up, their ability to follow instructions degrades — they lose track of constraints, forget earlier objectives, and start producing output that drifts from the original task. A well-engineered harness solves this by externalizing memory. Instead of cramming 100,000 tokens of history into the model, it uses state persistence — progress files, structured logs, git history — to keep the agent grounded without bloating its context window. This is what gives an agent continuity: not a bigger context window, but a smarter one.

No cross-session memory is the second. Every new session starts blind. The agent forgets everything it accomplished previously, such as the decisions it made, the files it modified, and the conventions it learned about your codebase. Without a harness that persists state across sessions, your agent is perpetually a new hire on their first day. The fix is the same infrastructure: external memory systems that the harness loads at the start of each session, giving the agent a running start instead of a cold start.
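As a rough illustration of externalized memory, the sketch below persists session state to a JSON progress file and turns it into a short preamble for the next session. The file layout and the names `load_state`, `save_state`, and `build_session_preamble` are assumptions made for this example, not part of any particular framework.

```python
import json
from pathlib import Path


def load_state(path: Path) -> dict:
    """Return the saved session state, or an empty state on the first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"completed_steps": [], "decisions": []}


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file first, then rename, so a crash can't leave a half-written file."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(state, indent=2), encoding="utf-8")
    tmp.replace(path)


def build_session_preamble(state: dict) -> str:
    """Summarize persisted state into a short block for the model's context."""
    steps = ", ".join(state["completed_steps"]) or "none"
    decisions = "; ".join(state["decisions"]) or "none"
    return f"Completed steps: {steps}\nDecisions so far: {decisions}"
```

The harness would call `load_state` at the start of a session, prepend the preamble to the prompt, and call `save_state` after each completed step, so the context carries a compact summary rather than the full history.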

No self-verification is the third. Models don't flag their own uncertainty. They produce confident output whether it's correct or not, and errors compound: a 10-step process with 99% per-step accuracy still yields only about 90% end-to-end success. In practice, per-step accuracy is often much lower. Without verification loops built into the harness, such as test suites, format validators, or a second model acting as a reviewer, those mistakes propagate silently through every downstream step. The harness catches what the model can't catch about itself.
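The compounding arithmetic is easy to check. The snippet below assumes each step succeeds independently with the same probability, which is a simplification; the function name is illustrative.

```python
def end_to_end_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_accuracy ** steps


print(round(end_to_end_success(0.99, 10), 3))  # 0.904
print(round(end_to_end_success(0.95, 10), 3))  # 0.599
```

Under that assumption, dropping per-step accuracy from 99% to 95% takes a 10-step task from roughly 90% to roughly 60% end-to-end success.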

The evidence for harness-first engineering is empirical, not theoretical. The Terminal Bench 2.0 results showed that harness-only changes moved agents by 20+ ranking positions. Separately, analyses have found that the same model running inside different harnesses can produce wildly different performance — not because the model changed, but because the surrounding infrastructure did.

How Is Harness Engineering Different from Context Engineering?

Context engineering and harness engineering aren't competing disciplines; they're nested. Context engineering manages what the model sees at any given moment: which documents get retrieved, how conversation history is assembled, what tool definitions are in scope. Harness engineering encompasses all of that, plus how the system operates over time: the tools, permissions, state persistence, verification loops, and failure handling that keep the agent on track across steps and sessions. Getting the context right without a harness gives you a model that reasons well in isolation but drifts over real tasks. Building a harness without good context gives you solid infrastructure feeding the model garbage. You need both.

How Do You Make AI Agents Reliable in Production?

Most teams try to make agents reliable by adding more components: better retrieval, more tools, tighter prompts. But reliability doesn't come from any single component. It comes from a systematic process for finding and fixing the specific ways your agent fails.

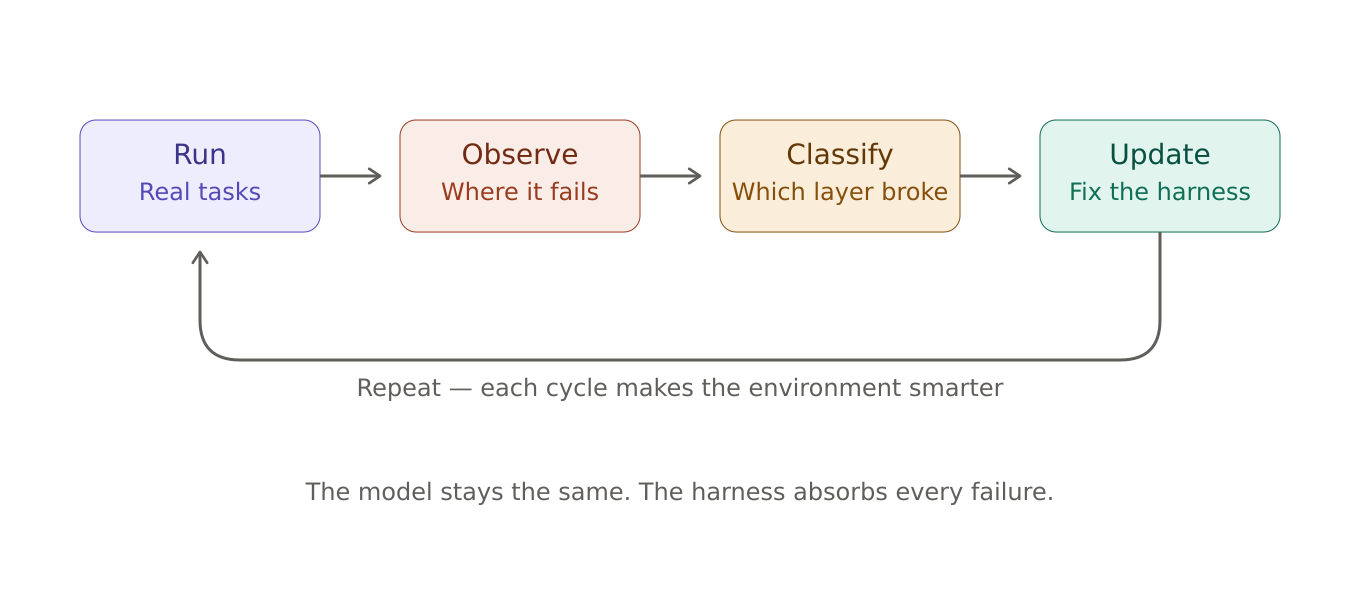

The core practice of harness engineering is an iterative loop: run the agent on real tasks, observe where it fails, classify the failure, update the harness, and repeat. Every cycle makes the environment smarter, even when the model stays the same. This is the insight that made Hashimoto's framing stick — you're not debugging the model, you're debugging the environment.

Classify Failures, Then Fix the Right Layer

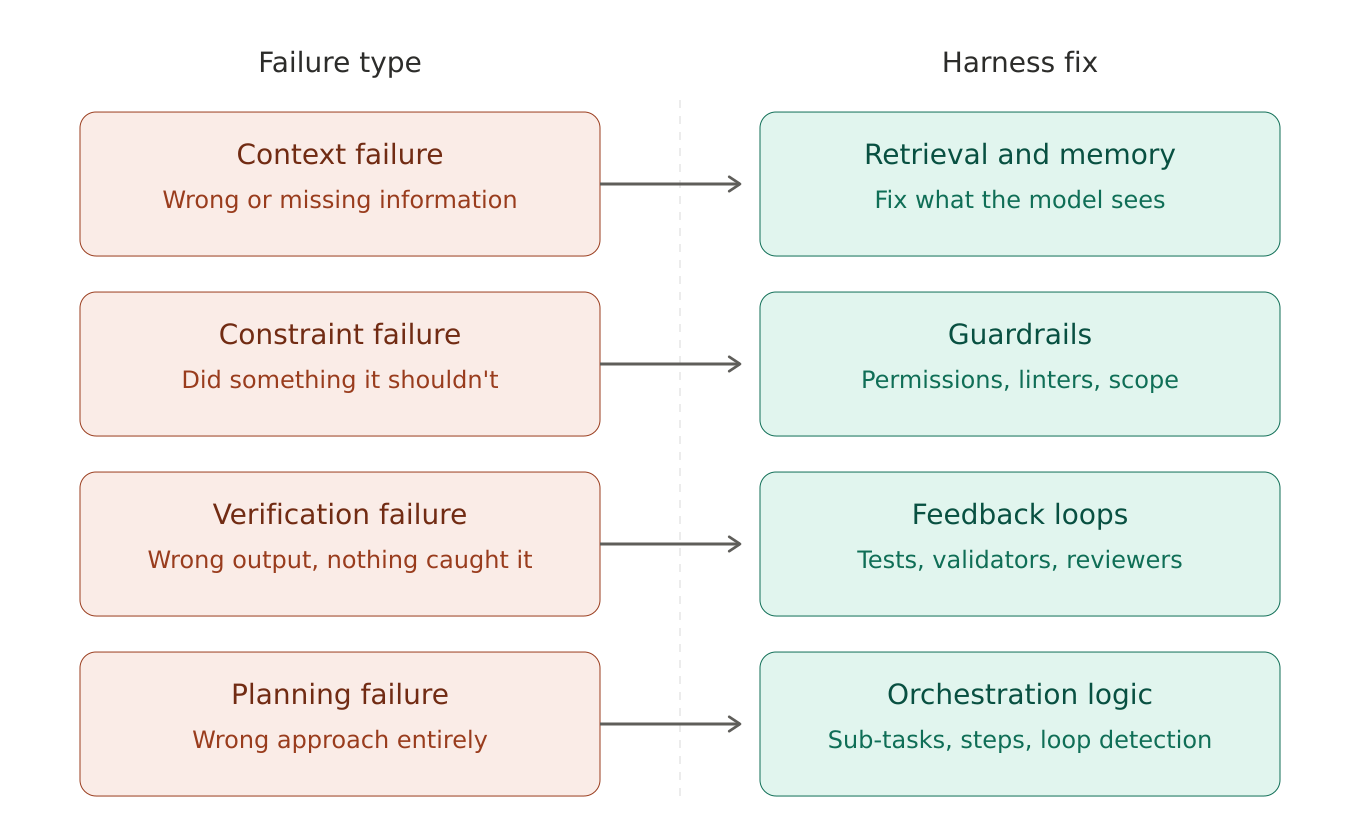

Not all agent failures are the same, and misdiagnosing the type leads to wasted effort. When an agent produces a bad result, ask which layer broke:

- A context failure means the agent didn't have the right information at the right time. It hallucinated a database schema because it wasn't provided one, or it lost track of the objective because the conversation history overflowed its context window. The fix lives in your context engineering: retrieval logic, memory management, or how you structure what the model sees at each step.

- A constraint failure means the agent had the information but did something it shouldn't have. It rewrote files outside its scope, ignored architectural boundaries, or called a tool it didn't need. The fix is a guardrail — a permission boundary, a linter rule, a scope limit that makes the bad action structurally impossible next time.

- A verification failure means the agent produced output that looked plausible but was wrong, and nothing caught it. The fix is a feedback loop — a test suite, a format validator, or a second model acting as a reviewer that runs before the output is finalized.

- A planning failure means the agent took the wrong approach entirely. It tried to solve the problem in one step when it needed five, or it went down a dead-end path and looped on the same broken strategy. The fix is in your orchestration logic: breaking the task into smaller steps, adding sub-agent delegation, or introducing loop detection that nudges the agent to reconsider its approach after repeated failed attempts.

This classification turns vague "the agent messed up" conversations into targeted harness updates. Over time, each fix accumulates — the harness absorbs the failure and prevents it from recurring.
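One way to make the four-way classification concrete is to tag each failed run with a type and tally the results. This is a minimal sketch: the enum, the mapping text, and `most_common_failure` are illustrative names, and the labels themselves would still come from a human or a reviewer model reading the traces.

```python
from collections import Counter
from enum import Enum


class FailureType(Enum):
    CONTEXT = "context"
    CONSTRAINT = "constraint"
    VERIFICATION = "verification"
    PLANNING = "planning"


# Which harness layer to update for each failure type, following the list above.
FIX_LAYER = {
    FailureType.CONTEXT: "context engineering (retrieval, memory, prompt assembly)",
    FailureType.CONSTRAINT: "guardrails (permissions, linter rules, scope limits)",
    FailureType.VERIFICATION: "feedback loops (tests, validators, reviewer model)",
    FailureType.PLANNING: "orchestration (task decomposition, sub-agents, loop detection)",
}


def most_common_failure(labels: list[FailureType]) -> tuple[FailureType, str]:
    """Return the most frequent failure type and the layer that should change.

    Expects a non-empty list of labels.
    """
    failure, _count = Counter(labels).most_common(1)[0]
    return failure, FIX_LAYER[failure]
```

Tallying labels across a batch of runs points the next harness update at the layer that fails most often rather than the one that failed most recently.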

Route Different Tasks to Different Models

Not every step in an agent workflow needs the same model. A high-reasoning model can handle planning and complex decision-making, while a smaller, faster model handles repetitive verification or data extraction. AI orchestration platforms like Haystack already support this kind of multi-model routing, letting teams assign models per pipeline step based on cost, latency, and capability requirements. As the cost gap between reasoning tiers widens, this pattern is quickly becoming standard practice rather than an optimization experiment.
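Stripped of any framework, per-step routing can be as small as a lookup table. The sketch below is framework-agnostic and does not use Haystack's API; the model names, token limits, and the `route_for` helper are placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str              # placeholder model identifier, not a real model name
    max_output_tokens: int


# Hypothetical routing table: step kind -> model tier.
ROUTES = {
    "planning": Route(model="large-reasoning-model", max_output_tokens=4096),
    "verification": Route(model="small-fast-model", max_output_tokens=512),
    "extraction": Route(model="small-fast-model", max_output_tokens=1024),
}

# Unknown step kinds fall back to the most capable tier.
DEFAULT_ROUTE = ROUTES["planning"]


def route_for(step_kind: str) -> Route:
    """Pick the model configuration for a pipeline step."""
    return ROUTES.get(step_kind, DEFAULT_ROUTE)
```

Falling back to the strongest tier for unknown steps trades cost for safety; a cost-sensitive team might prefer the opposite default.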

Isolate Sub-Tasks to Protect the Main Context

For long-horizon tasks, sub-agents are one of the most powerful tools for maintaining coherence. The parent agent delegates a specific sub-task to a sub-agent running in its own isolated context window. The sub-agent does the work (research, implementation, or data transformation) and returns only the final result. None of the intermediate tool calls, failed attempts, or reasoning noise ends up in the parent's context. This keeps the main orchestration thread clean and focused, directly addressing the context rot problem described above and dramatically extending how long an agent can operate before performance degrades.
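The isolation pattern can be sketched without any specific agent framework. In the example below, `step_fn` is a hypothetical stand-in for one model call, and the `"final"` role is an assumed convention for marking the sub-agent's answer.

```python
from typing import Callable

Message = dict[str, str]


def run_subagent(
    task: str,
    step_fn: Callable[[list[Message]], Message],
    max_steps: int = 5,
) -> str:
    """Run a sub-task in a fresh message list and return only the final answer."""
    context: list[Message] = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = step_fn(context)
        context.append(reply)  # intermediate work stays in the sub-agent's context
        if reply["role"] == "final":
            return reply["content"]
    return "sub-agent stopped: step limit reached"


def delegate(
    parent_context: list[Message],
    task: str,
    step_fn: Callable[[list[Message]], Message],
) -> None:
    """Append only the sub-agent's result to the parent's context."""
    result = run_subagent(task, step_fn)
    parent_context.append({"role": "tool", "content": result})
```

However many steps the sub-agent takes, the parent's context grows by exactly one message per delegation.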

Constrain More, Not Less

This is counterintuitive, but well-supported by production experience. Limiting what an agent can touch in a single task — which files it can modify, which tools it can access, which directories it can write to — doesn't reduce its effectiveness. It focuses it. A well-constrained agent produces higher-quality output precisely because it can't wander into territory that creates downstream problems. Start restrictive and loosen as you gain confidence. It's far easier to remove guardrails from a working system than to retroactively add them to a fragile one.
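A write-scope guardrail might look like the following sketch, which checks a requested path against an allowlist of directories before any file tool runs. The function name and the allowlist-of-directories design are assumptions for illustration.

```python
from pathlib import Path


def is_write_allowed(target: str, allowed_dirs: list[str], workspace: str) -> bool:
    """Return True only if target resolves to a location inside an allowed directory.

    Paths are resolved first, so "src/../secrets.txt" cannot slip through.
    """
    root = Path(workspace).resolve()
    resolved = (root / target).resolve()
    for allowed in allowed_dirs:
        allowed_root = (root / allowed).resolve()
        if resolved == allowed_root or allowed_root in resolved.parents:
            return True
    return False
```

Starting with a short `allowed_dirs` list and extending it as confidence grows matches the "start restrictive and loosen" advice above.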

Instrument Everything

A harness without observability is a harness you can't improve. Log agent actions, tool calls, token usage, and decision points. When the agent fails, these traces are what let you classify the failure and make the right harness update. Even simple file-based logging is enough to start. The goal isn't a perfect monitoring dashboard on day one — it's having the data to run the next iteration of the improvement loop.
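File-based logging of the kind described here can be a few lines of JSON Lines output. The event kinds and field names below are illustrative assumptions, not a fixed schema.

```python
import json
import time
from pathlib import Path


def log_event(log_path: Path, kind: str, **fields) -> None:
    """Append one agent event (tool call, decision, error) as a JSON line."""
    record = {"ts": time.time(), "kind": kind, **fields}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def read_events(log_path: Path) -> list[dict]:
    """Load all logged events for later failure classification."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A call such as `log_event(path, "tool_call", tool="search", tokens=812)` records enough to reconstruct what the agent did when a run fails.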

Treat the Harness as a Living System

The improvement loop never truly ends. Run the agent on real tasks. Analyze the traces. Classify the failures. Update the harness. Repeat. Each cycle focuses on the mistakes from the previous run and engineers them out of the environment. Teams that adopt this practice consistently report that harness iteration delivers larger reliability gains than model upgrades, at a fraction of the cost.

How Do You Build Agent Harnesses and Context Engineering Pipelines with Haystack?

You don't need a massive R&D budget to start architecting your harness and context pipelines. The shift is iterative. Start with the failure types your agent hits most, build the components that address them, and refine the context your agent sees at each step. Haystack is built for exactly this kind of work. As an open-source AI orchestration framework, it gives you modular control over every layer: retrieval, routing, memory, tools, guardrails, and generation, without locking you into a single model or vendor.

Here's how to start applying these practices today:

- Design Modular Pipelines, Not Monolithic Prompts. The biggest mistake teams make is stuffing all their logic into a single prompt and hoping the model figures it out. Haystack structures agent workflows as explicit, composable pipelines where each component, including retrievers, routers, generators, tool invokers and memory layers, can be tested, swapped, and improved independently. Add branches, loops, and conditional logic to control exactly how context flows through your system. When something breaks, you can isolate the failing component instead of debugging a black box.

- Route Models Per Step. Haystack is model- and vendor-agnostic by design. It integrates with OpenAI, Anthropic, Mistral, Cohere, Hugging Face, Azure, AWS Bedrock, and local models — and you can assign different models to different pipeline steps without rewriting your system. Use a high-reasoning model for planning, a smaller model for verification, and a fast local model for data extraction. This is multi-model routing as a first-class architectural pattern, not an afterthought.

- Isolate Sub-Tasks with Multi-Agent Orchestration. Haystack supports using agents as tools for other agents. A main orchestrator agent delegates sub-tasks such as research, implementation, and content generation to specialized sub-agents that operate in isolated contexts. The parent only sees the final result, not the intermediate noise. This keeps your main reasoning chain clean and dramatically extends how long your agent can operate before context rot degrades performance.

- Integrate Verification Into the Pipeline. Don't trust agent output by default. Haystack's composable architecture lets you wire evaluation and validation steps directly into the pipeline — linters, format validators, schema checks, or an LLM-as-judge auditor that reviews output before it's delivered. These aren't bolted-on afterthoughts; they're components in the same graph, running as part of every execution.

- Connect to Any Data Source via MCP. Haystack has native MCP support on both sides. With Hayhooks, you can expose any Haystack pipeline or agent as an MCP tool with a single command — making it available to AI dev environments like Cursor or Claude Desktop. Going the other direction, MCPToolset lets your agents connect to any MCP-compliant server and automatically load its tools, plugging into external data sources, APIs, and services without custom integration code.

- Deploy with Governance. Moving from prototype to production is where most agent projects stall. Haystack pipelines are fully serializable and cloud-agnostic: deploy on any cloud or on-premise with built-in logging, monitoring, and tracing. The Haystack Enterprise Platform adds governance, access controls, observability, and collaboration tooling for teams that need to operate harnesses at scale under compliance requirements.
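To illustrate the verification practice from the list above independently of any framework, the sketch below wraps a generation call in a validate-and-retry loop. It is plain Python rather than Haystack's API; `generate` is a hypothetical stand-in for a model call, and the JSON-with-required-keys check is just one example of a format validator.

```python
import json
from typing import Callable, Optional


def validate_json_output(text: str, required_keys: set[str]) -> Optional[str]:
    """Return None if the output is valid, else an error message to feed back."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        return f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return "expected a JSON object"
    missing = required_keys - set(data)
    if missing:
        return f"missing keys: {sorted(missing)}"
    return None


def generate_with_verification(
    generate: Callable[[str], str],
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> str:
    """Call the model, validate the output, and retry with the error as feedback."""
    feedback = ""
    for _ in range(max_attempts):
        output = generate(prompt + feedback)
        error = validate_json_output(output, required_keys)
        if error is None:
            return output
        feedback = f"\n\nPrevious attempt failed validation: {error}. Fix and retry."
    raise ValueError(f"output failed validation after {max_attempts} attempts")
```

Raising after the last attempt, instead of returning the bad output, keeps an unverified result from flowing silently into downstream steps.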

What's Next for Harness Engineering?

As we look toward 2027, the discipline is moving from static scaffolding to dynamic governance.

Self-Analyzing Harnesses: Emerging tools now use AI to optimize AI. AutoAgent, an open-source framework released in April 2026, uses a meta-agent that reads a task agent's execution traces, identifies recurring failure patterns, rewrites the harness, and benchmarks the new version, looping thousands of times without human intervention. Backed by the Meta-Harness paper from Stanford and MIT (March 2026), the approach hit first place on SpreadsheetBench and the top GPT-5 score on TerminalBench, beating every hand-engineered entry on both leaderboards. The harness is starting to engineer itself.

Continual Learning Primitives: Instead of starting blind each session, harnesses are beginning to implement long-term memory that persists across weeks and months of operation. Agents onboard to a project by reading their own previous execution logs, progress files, and git history, collapsing ramp-up time from days to seconds. Research has shown agents autonomously developing persistent memory infrastructure for tracking what worked across iterations, even when that behavior wasn't explicitly programmed.

Standardized Agent Protocols: With the rise of the Model Context Protocol (MCP), introduced by Anthropic in late 2024 and now adopted by OpenAI, Google DeepMind, and thousands of third-party servers, harnesses are becoming increasingly interoperable. A harness built in Haystack can plug into any MCP-compliant data source or toolset without custom integration code, making the tool layer a commodity rather than a bottleneck.

Conclusion

The future of AI engineering isn't about finding the perfect model. It's about designing environments, specifying intent, and building the feedback loops that allow agents to do reliable work.

The role is shifting. AI engineers are becoming environment designers, using context engineering and agent harnesses at scale to make models productive rather than hoping raw capability is enough. Whether you're automating enterprise workflows or building knowledge discovery tools, the leverage is in the system around the model, not the model itself.

Start with the improvement loop. Classify your failures. That's where the work is and where the results come from.

If you're looking to operationalize these ideas, Haystack provides a flexible, open framework for building context-aware LLM applications and agents. From modular pipelines to advanced retrieval, memory, and orchestration layers, Haystack is designed to support every layer of context engineering and agent harness development, whether you're integrating private knowledge bases, designing tool-using agents, or deploying scalable AI services in production.
